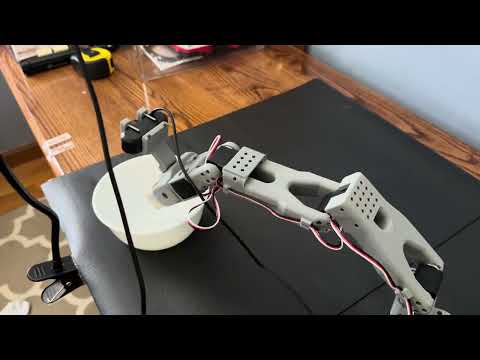

SO-ARM101 SmolVLA Finetuning

Motivation

Vision-Language-Action (VLA) models have recently been developed as a way for robots to receive tasks in natural language, reason about those tasks and their environment, and carry out complex tasks. These models are built on a Vision-Language model, which takes in both visual information about the environment and natural language commands, with an additional action head that outputs a series of actions (joint states, joint velocities, etc.) that allow the robot to complete the task. Many VLA models are available, including but not limited to OpenVLA, GrootN1.6, pi0.5, Gemini Robotics 1.5, and SmolVLA. I ultimately chose SmolVLA for this project, as it was designed specifically to run on consumer hardware while maintaining performance comparable to that of other VLA models.

Process and Results

Below, I describe my experience, the dataset collection method that worked best for me, and the various issues I ran into. To finetune a VLA model, a dataset of example tasks first needs to be collected, typically with teleoperation, so that the model can learn the actions necessary to complete the tasks with the specific robot. Once the dataset is collected, the model is finetuned on it, typically using at least one powerful GPU. In my case, since SmolVLA is a smaller model meant to require less compute, I finetuned it using a single A100 GPU on Google Colab. The dataset I used consisted of 100 recorded episodes of a pick-and-place task: picking up a wooden block and placing it into a bowl. A video showing the performance of my finetuned model is shown below:

Although the model does not succeed in every trial, this was the best-performing model of my three finetuning attempts.

I trained the first model on 60 episodes, with 5 distinct pick locations and 12 episodes per location. This was an initial test to learn about the process, and I did not expect it to work consistently. I was following this page in the lerobot documentation. The page states that 5 distinct locations with 10 episodes each was enough for good performance; however, I found that my model performed very poorly.

After this, I collected a new dataset of 100 episodes, split across 6 distinct locations spread over the robot's entire workspace. Again, the performance of this model was not good.

The third and final dataset also consisted of 100 episodes. However, instead of placing the cube at a few fixed locations spread throughout the workspace, I reduced the workspace to an area of about 20 cm x 25 cm in front of the robot and attempted to place the cube uniformly throughout that area. I also tried to introduce rotational variations of the cube, which resulted in the model somewhat learning to align the gripper's orientation with the cube during the grasp. This produced a model that performs much better than the previous two, although the robot still often fails grasps near the edges of the workspace, and fails less often near the center.
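I placed the cube by hand for the third dataset, but a placement plan could also be generated ahead of time to keep the coverage roughly uniform. The following is a minimal sketch of that idea, not something I used: the `Placement` class, the `sample_placements` function, the choice of which side is "width" and which is "depth", and the 0 to 90 degree yaw range are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    """One hypothetical cube pose for a single recorded episode."""
    x_cm: float     # position along the workspace width
    y_cm: float     # position along the workspace depth
    yaw_deg: float  # rotation of the cube about the vertical axis


def sample_placements(n, width_cm=20.0, depth_cm=25.0, max_yaw_deg=90.0, seed=0):
    """Draw n cube poses uniformly over a rectangular workspace.

    The 20 cm x 25 cm default matches the reduced workspace described above;
    the axis assignment and yaw range are assumptions, not measured values.
    """
    rng = random.Random(seed)  # fixed seed so the plan is reproducible
    return [
        Placement(
            x_cm=rng.uniform(0.0, width_cm),
            y_cm=rng.uniform(0.0, depth_cm),
            yaw_deg=rng.uniform(0.0, max_yaw_deg),
        )
        for _ in range(n)
    ]


if __name__ == "__main__":
    # Print the first few poses of a 100-episode plan.
    for i, p in enumerate(sample_placements(100)[:5]):
        print(f"episode {i}: x={p.x_cm:.1f} cm, y={p.y_cm:.1f} cm, yaw={p.yaw_deg:.0f} deg")
```

A plan like this only suggests where to put the cube before each teleoperated episode. Since my model failed more often near the edges of the workspace, it might also be worth sampling those regions more densely, though I have not tested that.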